This post describes how to configure WSO2 APIM 2.1.0 as a JMS producer and get a response back, as a complete end-to-end JMS scenario using Tibco EMS and WSO2 ESB 5.0.0.

- WSO2 APIM hosts the API that a client (e.g. cURL) invokes.

- Tibco EMS acts as the JMS server.

- WSO2 APIM 2.1.0 acts as a JMS producer: once a client invokes the API in API Manager, it sends a JMS message to a JMS queue in Tibco EMS. We will call this queue the sender queue (SMSStore).

- WSO2 ESB 5.0.0 acts as the JMS consumer: it subscribes to and listens on the sender JMS queue.

- WSO2 ESB hosts a proxy service which routes the message to a backend service and sends the response from the backend service back to a JMS receiver queue.

- WSO2 ESB also acts as a JMS producer when it sends the response received from the backend service to the destination JMS queue.

- The backend service we use is a sample service shipped with WSO2 ESB (SimpleStockQuoteService), deployed in the sample Axis2Server embedded within WSO2 ESB.

Figure: Design and Message flow of the setup

Configure and set up APIM

1) Configure JMSSender in <APIM_HOME>/repository/conf/axis2.xml

    <transportSender name="jms" class="org.apache.axis2.transport.jms.JMSSender">
        <parameter locked="false" name="QueueConnectionFactoryAPIM">
            <parameter locked="false" name="java.naming.factory.initial">com.tibco.tibjms.naming.TibjmsInitialContextFactory</parameter>
            <parameter name="java.naming.provider.url">tcp://tibco.server.host.one:7222,tcp://tibco.server.host.two:7222</parameter>
            <parameter locked="false" name="transport.jms.ConnectionFactoryJNDIName">QueueConnectionFactoryAPIM</parameter>
            <parameter locked="false" name="transport.jms.JMSSpecVersion">1.0.2b</parameter>
            <parameter name="transport.jms.MaxJMSConnections">5</parameter>
            <!-- By default, Axis2 spawns a new thread to handle each outgoing message.
                 To change this behavior, you need to remove the ClientApiNonBlocking property from the message.
                 Removal of this property can be vital when queuing transports like JMS are involved. -->
            <property action="remove" name="ClientApiNonBlocking" scope="axis2" />
            <parameter locked="false" name="transport.jms.ConnectionFactoryType">queue</parameter>
            <parameter name="transport.jms.DefaultReplyDestinationType" locked="true">queue</parameter>
            <parameter name="transport.jms.DestinationType" locked="true">queue</parameter>
            <parameter locked="false" name="transport.jms.UserName">apimuser</parameter>
            <parameter locked="false" name="transport.jms.Password">12345</parameter>
            <parameter locked="false" name="transport.jms.CacheLevel">connection</parameter>
        </parameter>
    </transportSender>
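A quick way to double-check the file after editing it is to list the connection factory definitions that the transport actually declares. The name of the outer parameter block (QueueConnectionFactoryAPIM here) is what the JMS endpoint later refers to through transport.jms.ConnectionFactory, so a typo there is easy to miss. The following is only a sketch in Python using the standard library; the function name connection_factory_names and the trimmed-down snippet are mine for illustration and are not part of WSO2.

```python
import xml.etree.ElementTree as ET


def connection_factory_names(transport_xml: str) -> list:
    """Return the names of the connection factory blocks in a transport element.

    Hypothetical helper: a factory definition is taken to be a direct
    <parameter> child that itself contains nested <parameter> elements.
    """
    root = ET.fromstring(transport_xml)
    return [
        child.get("name")
        for child in root.findall("parameter")
        if child.find("parameter") is not None
    ]


# Trimmed-down copy of the JMSSender configuration, for illustration only.
snippet = """
<transportSender name="jms" class="org.apache.axis2.transport.jms.JMSSender">
  <parameter locked="false" name="QueueConnectionFactoryAPIM">
    <parameter locked="false" name="transport.jms.ConnectionFactoryJNDIName">QueueConnectionFactoryAPIM</parameter>
    <parameter locked="false" name="transport.jms.ConnectionFactoryType">queue</parameter>
  </parameter>
</transportSender>
"""

print(connection_factory_names(snippet))
```

To run it against the real file, you would pass in the text of the transportSender element copied from axis2.xml instead of the snippet.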

2) Copy the following Tibco JMS client jars into the <APIM_HOME>/repository/components/extensions/ directory:

- jms-2.0.jar

- tibemsd_sec.jar

- tibjms.jar

- tibjmsadmin.jar

- tibjmsapps.jar

- tibjmsufo.jar

- tibrvjms.jar

3) Start the APIM server, create an API, publish it, and subscribe to it with an application. When creating the API, give its endpoint as a JMS endpoint that points to the JMS queue (i.e. SMSStore) which we will create later.

JMS endpoint :

"jms:/SMSStore?transport.jms.ConnectionFactory=QueueConnectionFactoryAPIM&transport.jms.ReplyDestination=SMSReceiveNotificationStore"

Note the following properties in that endpoint address.

- SMSStore (JMS queue name): the direct endpoint queue of the JMS message. It receives the JMS messages.

- transport.jms.ConnectionFactory=QueueConnectionFactoryAPIM: the queue connection factory used to create a QueueConnection between API Manager and the Tibco EMS server.

- transport.jms.ReplyDestination=SMSReceiveNotificationStore: the JMS queue that receives the response related to this request.
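To make the structure of that endpoint address concrete, here is a small sketch that splits it into the destination queue and its transport parameters. It is written in Python with the standard library only; parse_jms_endpoint is a hypothetical helper of mine, not a WSO2 or Axis2 API, and it only illustrates how the address reads.

```python
from urllib.parse import parse_qs, urlsplit


def parse_jms_endpoint(uri: str) -> dict:
    """Split a jms:/ endpoint address into destination and parameters.

    Hypothetical helper for illustration; WSO2 does its own parsing.
    """
    parts = urlsplit(uri)
    if parts.scheme != "jms":
        raise ValueError(f"not a JMS endpoint: {uri!r}")
    # The path without its leading slash is the destination queue name.
    destination = parts.path.lstrip("/")
    # parse_qs returns a list per key; each key appears once here.
    params = {key: values[0] for key, values in parse_qs(parts.query).items()}
    return {"destination": destination, "params": params}


endpoint = (
    "jms:/SMSStore"
    "?transport.jms.ConnectionFactory=QueueConnectionFactoryAPIM"
    "&transport.jms.ReplyDestination=SMSReceiveNotificationStore"
)
parsed = parse_jms_endpoint(endpoint)
print(parsed["destination"])
for name, value in parsed["params"].items():
    print(name, "=", value)
```

The destination is the queue APIM writes to, and the two parameters name the connection factory from axis2.xml and the queue on which APIM waits for the reply.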

Below is the section of the generated Synapse API file that contains the API resource with our JMS endpoint.

    <resource methods="POST" url-mapping="/b" faultSequence="fault">
        <inSequence>
            <property name="api.ut.backendRequestTime" expression="get-property('SYSTEM_TIME')" />
            <filter source="$ctx:AM_KEY_TYPE" regex="PRODUCTION">
                <then>
                    <send>
                        <endpoint name="admin--test_APIproductionEndpoint_1">
                            <http uri-template="jms:/SMSStore?transport.jms.ConnectionFactory=QueueConnectionFactoryAPIM&amp;transport.jms.ReplyDestination=SMSReceiveNotificationStore">
                                <timeout>
                                    <duration>60000</duration>
                                    <responseAction>fault</responseAction>
                                </timeout>
                            </http>
                            <property name="ENDPOINT_ADDRESS" value="jms:/SMSStore?transport.jms.ConnectionFactory=QueueConnectionFactoryAPIM&amp;transport.jms.ReplyDestination=SMSReceiveNotificationStore" />
                        </endpoint>
                    </send>
                </then>
                <else>
                    <sequence key="_sandbox_key_error_" />
                </else>
            </filter>
        </inSequence>
        <outSequence>
            <class name="org.wso2.carbon.apimgt.gateway.handlers.analytics.APIMgtResponseHandler" />
            <send />
        </outSequence>
    </resource>

Configure and set up ESB

1) Configure JMSListener and JMSSender in ESB - axis2.xml

    <transportReceiver name="jms" class="org.apache.axis2.transport.jms.JMSListener">
        <parameter locked="false" name="QueueConnectionFactoryESB">
            <parameter locked="false" name="java.naming.factory.initial">com.tibco.tibjms.naming.TibjmsInitialContextFactory</parameter>
            <parameter name="java.naming.provider.url">tcp://tibco.server.host.one:7222,tcp://tibco.server.host.two:7222</parameter>
            <parameter locked="false" name="transport.jms.ConnectionFactoryJNDIName">QueueConnectionFactoryESB</parameter>
            <parameter locked="false" name="transport.jms.JMSSpecVersion">1.0.2b</parameter>
            <parameter locked="false" name="transport.jms.ConnectionFactoryType">queue</parameter>
            <parameter locked="false" name="transport.jms.UserName">esbuser</parameter>
            <parameter locked="false" name="transport.jms.Password">12345</parameter>
            <parameter name="transport.jms.MaxJMSConnections">5</parameter>
        </parameter>
    </transportReceiver>

    <transportSender name="jms" class="org.apache.axis2.transport.jms.JMSSender">
        <parameter locked="false" name="QueueConnectionFactoryESB">
            <parameter locked="false" name="java.naming.factory.initial">com.tibco.tibjms.naming.TibjmsInitialContextFactory</parameter>
            <parameter name="java.naming.provider.url">tcp://tibco.server.host.one:7222,tcp://tibco.server.host.two:7222</parameter>
            <parameter locked="false" name="transport.jms.ConnectionFactoryJNDIName">QueueConnectionFactoryESB</parameter>
            <parameter locked="false" name="transport.jms.JMSSpecVersion">1.0.2b</parameter>
            <parameter locked="false" name="transport.jms.ConnectionFactoryType">queue</parameter>
            <parameter name="transport.jms.DefaultReplyDestinationType" locked="true">queue</parameter>
            <parameter name="transport.jms.DestinationType" locked="true">queue</parameter>
            <parameter locked="false" name="transport.jms.UserName">esbuser</parameter>
            <parameter locked="false" name="transport.jms.Password">12345</parameter>
            <parameter name="transport.jms.MaxJMSConnections">5</parameter>
            <parameter locked="false" name="transport.jms.CacheLevel">connection</parameter>
        </parameter>
    </transportSender>

These are all the axis2.xml configurations required for this setup.

2) In addition, copy the following Tibco JMS client jars into the <ESB_HOME>/repository/components/extensions/ directory:

- jms-2.0.jar

- tibemsd_sec.jar

- tibjms.jar

- tibjmsadmin.jar

- tibjmsapps.jar

- tibjmsufo.jar

- tibrvjms.jar

You can get them from the lib directory of the Tibco EMS installation, e.g. /home/samithac/tibco/ems/8.4/lib

3) Deploy the required proxy service in the ESB. To do that, copy the SMSForwardProxy.xml below into <ESB_HOME>/repository/deployment/server/synapse-configs/default/proxy-services

    <?xml version="1.0" encoding="UTF-8"?>
    <proxy xmlns="http://ws.apache.org/ns/synapse" name="SMSForwardProxy" transports="jms" startOnLoad="true">
        <description />
        <target>
            <inSequence>
                <send>
                    <endpoint>
                        <address uri="http://localhost:9000/services/SimpleStockQuoteService" />
                    </endpoint>
                </send>
            </inSequence>
            <outSequence>
                <send />
            </outSequence>
        </target>
        <parameter name="transport.jms.DestinationType">queue</parameter>
        <!-- listening to this queue -->
        <parameter name="transport.jms.Destination">SMSStore</parameter>
        <parameter name="transport.jms.ContentType">
            <rules xmlns="">
                <jmsProperty>contentType</jmsProperty>
                <default>text/xml</default>
            </rules>
        </parameter>
        <parameter name="transport.jms.ConnectionFactory">QueueConnectionFactoryESB</parameter>
    </proxy>

I will write another blog post describing how the WSO2 ESB proxy works. Be patient :-)

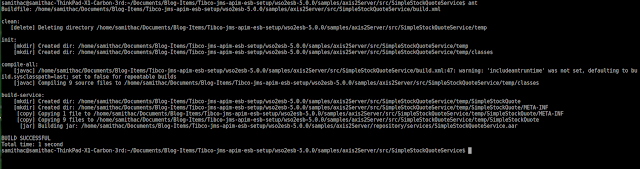

4) Deploy the sample backend service (SimpleStockQuoteService) into the Axis2 server. Open a command prompt (or a shell in Linux), go to the <ESB_HOME>/samples/axis2Server/src/SimpleStockQuoteService directory, and run the 'ant' command to build and deploy the sample.

5) Then start the Axis2 server bundled with WSO2 ESB. To do that, go to the <ESB_HOME>/samples/axis2Server/ directory and run the axis2server.sh script.

Configure and set up Tibco EMS JMS server

1) Go to the bin directory of the Tibco installation and start the server using the command below.

./tibemsd64 -config ~/TIBCO_HOME/tibco/cfgmgmt/ems/data/tibemsd.conf

This startup command will load the configurations from ~/TIBCO_HOME/tibco/cfgmgmt/ems/data/tibemsd.conf

You can use the TIBCO Enterprise Message Service Administration Tool to manage and view the connections, factories, etc. You can start it with the ./tibemsadmin64 command.

2) Create 2 queues in the JMS server

I have created two queues with the names below.

- SMSStore: the queue into which APIM sends the JMS message. The ESB server also consumes JMS messages from this queue.

- SMSReceiveNotificationStore: the queue into which the ESB sends the response JMS message.

Sample command:

create queue SMSReceiveNotificationStore

3) Create 2 ConnectionFactories in JMS server

I have already created the two JMS connection factories below.

- QueueConnectionFactoryAPIM

- QueueConnectionFactoryESB

Sample command:

create factory QueueConnectionFactoryAPIM queue url=tcp://localhost:7222 reconnect_attempt_delay=100000 reconnect_attempt_count=3

You can see the created connection factories in the /home/samithac/TIBCO_HOME/tibco/cfgmgmt/ems/data/factories.conf file, as shown below. You can also create connection factories by adding them to this file manually.

    [QueueConnectionFactoryESB]
    type = queue
    url = tcp://tibco.server.host.one:7222
    connect_attempt_count = 3
    connect_attempt_delay = 15000
    connect_attempt_timeout = 10000
    reconnect_attempt_count = 1
    reconnect_attempt_delay = 1
    reconnect_attempt_timeout = 5000

    [QueueConnectionFactoryAPIM]
    type = queue
    url = tcp://tibco.server.host.one:7222
    connect_attempt_count = 3
    connect_attempt_delay = 15000
    connect_attempt_timeout = 10000
    reconnect_attempt_count = 1
    reconnect_attempt_delay = 1
    reconnect_attempt_timeout = 5000
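If you edit factories.conf by hand, it can help to confirm that both factories are present and typed as queue factories before starting the other servers. The sketch below does that in Python with the standard configparser module. It assumes the file keeps the simple [name] and key = value layout shown above, which may not hold for every EMS configuration; missing_factories is a hypothetical helper name of mine.

```python
import configparser

# The two factory names used throughout this post.
REQUIRED_FACTORIES = ("QueueConnectionFactoryAPIM", "QueueConnectionFactoryESB")


def missing_factories(conf_text: str, required=REQUIRED_FACTORIES) -> list:
    """Return the required factories that are absent or not of type 'queue'.

    Assumes an INI-like layout: [FactoryName] followed by key = value lines.
    """
    # Only '=' is a delimiter, so the ':' inside tcp:// URLs stays in the value.
    parser = configparser.ConfigParser(delimiters=("=",), interpolation=None)
    parser.read_string(conf_text)
    return [
        name
        for name in required
        if not parser.has_section(name)
        or parser.get(name, "type", fallback="") != "queue"
    ]


# Shortened copy of the listing above, for illustration only.
sample = """
[QueueConnectionFactoryESB]
type = queue
url = tcp://tibco.server.host.one:7222

[QueueConnectionFactoryAPIM]
type = queue
url = tcp://tibco.server.host.one:7222
"""

print(missing_factories(sample))
```

An empty list means both factories were found. For the real file, read its contents into a string and pass that in instead of the sample.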

OK, now you are ready. You can invoke the API and get a response back. Below is how I invoked the API with the cURL client and got the expected response. The payload is saved in an XML file named payload.xml, placed in the directory from which the curl command is run.

    <soapenv:Envelope xmlns:soapenv="http://schemas.xmlsoap.org/soap/envelope/" xmlns:ser="http://services.samples" xmlns:xsd="http://services.samples/xsd">
        <soapenv:Header/>
        <soapenv:Body>
            <ser:getQuote>
                <!--Optional:-->
                <ser:request>
                    <!--Optional:-->
                    <xsd:symbol>message</xsd:symbol>
                </ser:request>
            </ser:getQuote>
        </soapenv:Body>
    </soapenv:Envelope>

Sample cURL command:

curl -X POST --header 'Content-Type: application/xml' --header 'Accept: application/xml' --header 'Authorization: Bearer 9b17298d-6404-338e-9036-271bb7a239f3' -d @payload.xml 'https://10.100.7.124:8243/test/1.0.0/b' -k
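If you would rather script the call than use cURL, the same request can be put together with Python's standard library. This is only a sketch under a few assumptions: build_request and invoke are hypothetical helper names, the 60 second timeout is my own choice, and certificate verification is switched off to mirror curl's -k flag, which is only reasonable on a test setup like this one.

```python
import ssl
import urllib.request


def build_request(url: str, token: str, payload: bytes) -> urllib.request.Request:
    """Build the POST request that mirrors the cURL command above."""
    return urllib.request.Request(
        url,
        data=payload,
        method="POST",
        headers={
            "Content-Type": "application/xml",
            "Accept": "application/xml",
            "Authorization": f"Bearer {token}",
        },
    )


def invoke(request: urllib.request.Request, timeout: float = 60.0) -> str:
    """Send the request and return the response body as text."""
    # Equivalent of curl -k: skip certificate checks (test setups only).
    context = ssl.create_default_context()
    context.check_hostname = False
    context.verify_mode = ssl.CERT_NONE
    with urllib.request.urlopen(request, timeout=timeout, context=context) as response:
        return response.read().decode("utf-8")
```

To use it, read payload.xml as bytes, pass it to build_request together with the gateway URL and your own access token, and hand the result to invoke.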

That's it. Cheers...!

References:

http://blog.samisa.org/2014/01/jms-usecases-tutorial-with-wso2-esb.html

https://docs.wso2.com/display/ESB500/ESB+as+Both+a+JMS+Producer+and+Consumer